Introduction

In today's data-centric environment, optimizing the efficiency and reliability of data processing workflows is crucial. One strategy for monitoring job performance involves reviewing job statistics and cross-referencing them with log files. However, this approach is time-consuming and manual. This article proposes a method that uses Artificial Intelligence (AI) services, such as OpenAI, to streamline tasks related to FME Flow administration.

The provided workspace lets FME Flow Administrators use AI to examine job logs and identify anomalies based on job statistics. The analysis can run either in batch mode, processing a specified number of days' worth of jobs, or immediately after a job fails to complete. In batch mode, the first step is to download the FME job statistics (such as CPU time, CPU percentage, peak memory usage, job status, and duration) and supply them to an AI service for outlier detection. AI models such as GPT-4 can spot anomalies within these metrics and flag underperforming jobs that diverge significantly from the norm. By using AI services to highlight outliers in job performance, FME Flow Administrators can manage workflows more proactively.

Note: the workspace provided in this article was first demonstrated during the Breaking Barriers & Leveraging the Latest Developments in AI Technology webinar.

Requirements

- FME Form

- FME Flow

- API Key to an AI service (e.g., OpenAI, Amazon Bedrock, or a local LLM)

Step-by-Step Instructions

Begin by downloading the provided .fmw file and follow this guide as we break down each phase of the workspace. After downloading, open the workspace in FME Workbench for a test run before integrating it into your FME Flow environment. Start by configuring the parameters listed under the User Parameters section in the Navigator pane:

- FME Flow Host URL: https://<YourFMEFlowInstance>.com

- API Key: abcd1234 (currently configured for OpenAI's GPT-4; note that you can swap the OpenAIChatGPTConnector for your desired or approved AI service)

- Number of Days to Review: 3

Consider publishing this workspace to FME Flow and creating a schedule to run the job regularly. It will enable your organization to enhance data processing operations, pinpoint outliers, and diagnose job performance issues in batch mode or immediately after a job execution failure. For further details, refer to the Considerations section.

Part 1: Collect and Prepare Job Statistics

The FME Flow REST API can retrieve job metrics for up to 1,000 jobs per request. In this instance, we aim to analyze job executions over the previous 7 days on FME Flow, but this timeframe can be adjusted by modifying the "Number of Days to Review" parameter value.

The workspace begins with the Creator transformer, which generates 25 features and initializes the workspace. Each feature's offset attribute is incremented by 1,000 compared to the previous one. The HTTPCaller then uses these offsets to acquire statistics for the last 25,000 jobs, whether they finished with a success or failed status. Depending on your requirements, the number of features created by the Creator can be increased or decreased.
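The offset paging described above can be sketched outside of FME as follows. This is only an illustration, not the workspace's implementation: the endpoint path, the `limit` and `offset` query parameter names, and the choice to start at offset 0 are assumptions, and `job_page_urls` is a hypothetical helper name.

```python
from urllib.parse import urlencode

PAGE_SIZE = 1000   # jobs per request, matching the limit described above
PAGE_COUNT = 25    # mirrors the 25 features generated by the Creator


def page_offsets(page_count=PAGE_COUNT, page_size=PAGE_SIZE):
    """Return one offset per page: 0, 1000, 2000, ... (starting at 0 is assumed)."""
    return [page * page_size for page in range(page_count)]


def job_page_urls(host, page_count=PAGE_COUNT, page_size=PAGE_SIZE):
    """Build one request URL per page.

    The endpoint path and parameter names below are assumptions for
    illustration; check them against your FME Flow REST API documentation.
    """
    base = host.rstrip("/") + "/fmerest/v3/transformations/jobs/completed"
    return [
        base + "?" + urlencode({"limit": page_size, "offset": offset})
        for offset in page_offsets(page_count, page_size)
    ]


urls = job_page_urls("https://<YourFMEFlowInstance>.com")
print(len(urls))   # one URL per page
print(urls[1])     # second page, offset 1000
```

Raising or lowering `PAGE_COUNT` corresponds to changing the number of features the Creator generates.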

After the job statistics are downloaded, the JSONFragmenter and Tester identify features corresponding to jobs completed within the last 7 days. Subsequently, the StatisticsCalculator calculates the minimum, maximum, and average values for essential metrics such as peak memory usage, CPU percentage, and CPU time. Upon calculating these statistics, they are organized into a JSON feature and forwarded to OpenAI for the analysis and identification of outliers.
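As a rough equivalent of the Tester and StatisticsCalculator steps, the sketch below filters jobs by finish time and computes the minimum, maximum, and average of each metric. The field names (`timeFinished`, `peakMemUsage`, `cpuPct`, `cpuTime`) and the sample values are hypothetical placeholders, not confirmed REST API output.

```python
from datetime import datetime, timedelta, timezone
from statistics import mean

# Hypothetical metric field names, used only for this illustration.
METRICS = ("peakMemUsage", "cpuPct", "cpuTime")


def recent_jobs(jobs, days, now=None):
    """Keep jobs whose finish time falls within the last `days` days."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=days)
    return [j for j in jobs if datetime.fromisoformat(j["timeFinished"]) >= cutoff]


def summarize(jobs, metrics=METRICS):
    """Compute min, max, and average for each metric across the given jobs."""
    summary = {}
    for name in metrics:
        values = [j[name] for j in jobs]
        if values:  # skip metrics when no jobs survived the filter
            summary[name] = {"min": min(values), "max": max(values), "avg": mean(values)}
    return summary


# Made-up sample records for demonstration only.
sample_jobs = [
    {"id": 101, "timeFinished": "2024-05-10T08:00:00+00:00",
     "peakMemUsage": 200, "cpuPct": 40.0, "cpuTime": 1200},
    {"id": 102, "timeFinished": "2024-05-09T08:00:00+00:00",
     "peakMemUsage": 400, "cpuPct": 80.0, "cpuTime": 3000},
    {"id": 103, "timeFinished": "2024-04-01T08:00:00+00:00",
     "peakMemUsage": 900, "cpuPct": 95.0, "cpuTime": 9000},
]
reference_time = datetime(2024, 5, 10, 12, 0, tzinfo=timezone.utc)
kept = recent_jobs(sample_jobs, days=7, now=reference_time)
print(summarize(kept))
```

Passing `now` explicitly keeps the filter reproducible when testing; in a scheduled run it would default to the current time.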

Part 2: Upload Job Stats to AI Service

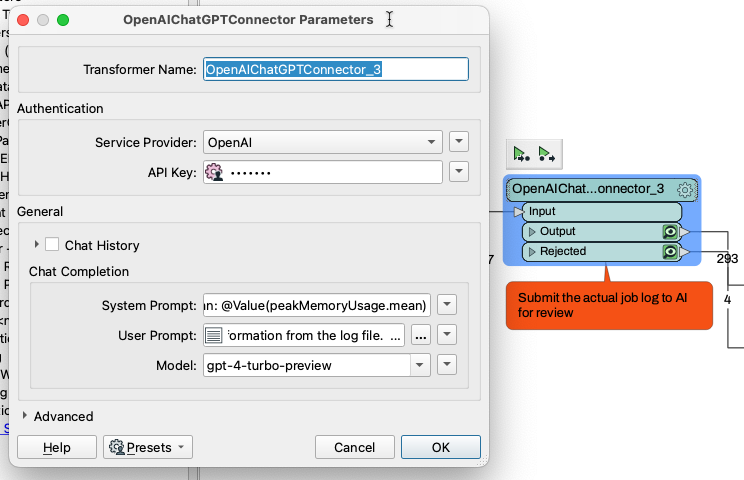

Use AI connector transformers such as the OpenAIChatGPTConnector, AmazonBedrockConnector, or the LocalGenerativeAICaller to pinpoint outliers in the job statistics. Note that the number of jobs you can analyze is constrained by the maximum token count your selected model can process. In this scenario, we use the OpenAIChatGPTConnector to upload the job statistics to GPT-4.

The following is an illustration of a sample JSON feature generated by the JSONTemplater:

In this scenario, the AI model (GPT-4) examines the job statistics to detect outliers, guided by specific criteria such as unusual CPU time and memory usage. After completing the analysis, GPT-4 returns a list of jobs that warrant further investigation. The list is provided in CSV format, as specified in the user or system prompt, which gives a structured way to pinpoint and address potential job performance issues.
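To make the prompt-driven CSV request more concrete, here is a minimal sketch of how the statistics and instructions could be packaged as chat messages. The prompt wording, the `job_id` header, and the payload layout are hypothetical; the prompts actually configured in the workspace's OpenAIChatGPTConnector may differ.

```python
import json

# Hypothetical system prompt; the workspace's actual prompt is not shown here.
SYSTEM_PROMPT = (
    "You review FME Flow job statistics. Identify jobs whose CPU time or peak "
    "memory usage is unusual compared with the rest. Reply with CSV only, one "
    "job ID per row, under the header job_id."
)


def build_messages(summary, jobs):
    """Package the summary statistics and per-job metrics as chat messages."""
    payload = {"summary": summary, "jobs": jobs}
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        # Compact separators keep the payload small, which matters because
        # the model's token limit caps how many jobs fit in one request.
        {"role": "user", "content": json.dumps(payload, separators=(",", ":"))},
    ]


messages = build_messages(
    {"cpuTime": {"min": 1200, "max": 3000, "avg": 2100}},
    [{"id": 101, "cpuTime": 1200}, {"id": 102, "cpuTime": 3000}],
)
print(messages[1]["content"])
```

Asking for a fixed, machine-readable reply format in the prompt is what makes the later parsing step straightforward.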

Part 3: Parse the AI Response and Download the Job Logs to Review

After the AI model returns the outlier job IDs in CSV format, a StringSearcher transformer parses the response and extracts only the job IDs. A FeatureMerger then merges the returned list of outlier IDs with the existing feature(s).

Next, a Tester transformer, configured with a Contains REGEX operator, checks that the returned job IDs match at least one job ID initially retrieved from the FME Flow REST API. This validation step guards against IDs that do not correspond to real jobs. Once a match is confirmed, an HTTPCaller downloads the job log for each identified outlier job ID individually, so each outlier case can be investigated in detail.
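The parse-and-validate logic can be approximated in a few lines. This sketch assumes the model's reply contains job IDs as the only integers in the text; the regular expression and function names are illustrative and are not the expressions used in the workspace's StringSearcher or Tester.

```python
import re


def extract_job_ids(response_text):
    """Pull standalone integers out of the reply, preserving first-seen order."""
    seen = []
    for match in re.findall(r"\b\d+\b", response_text):
        job_id = int(match)
        if job_id not in seen:
            seen.append(job_id)
    return seen


def validated_outliers(response_text, known_job_ids):
    """Keep only returned IDs that were actually retrieved from FME Flow."""
    known = set(known_job_ids)
    return [job_id for job_id in extract_job_ids(response_text) if job_id in known]


# Made-up reply and ID list for demonstration.
reply = "job_id\n1042\n1077\n9999\n"
retrieved_ids = [1001, 1042, 1077]
print(validated_outliers(reply, retrieved_ids))
```

Filtering against the retrieved IDs drops anything the model invented, such as `9999` in this sample, before any log download is attempted.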

Part 4: Download and Analyze Job Logs

After the job logs are retrieved, the workspace uploads them to the designated AI model for examination.

A second OpenAIChatGPTConnector lets GPT-4 analyze the logs in depth to identify critical aspects such as:

- Key warning or error messages.

- Bottlenecks in specific steps, based on timestamps (e.g., reading data, writing data, HTTP requests, or transformation steps).

- Features processed per second, based on the duration of the workspace and the number of features read into the job and written out.

- Reason for failure.
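The throughput item in the list above is simple arithmetic, which the following sketch spells out so the AI's figure can be sanity-checked. The function name and the sample numbers are hypothetical.

```python
def features_per_second(features_read, features_written, duration_seconds):
    """Return read and write throughput, or None when the duration is not positive."""
    if duration_seconds <= 0:
        return None
    return {
        "read_per_sec": features_read / duration_seconds,
        "written_per_sec": features_written / duration_seconds,
    }


# Made-up example: 12,000 features read and 9,000 written in 60 seconds.
print(features_per_second(12000, 9000, 60))
```

Comparing the model's reported rate with a direct calculation like this is a cheap way to catch arithmetic slips in the generated summary.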

Part 5: Generate and Publish Results to FME Flow

Compiling the insights from the AI service's analysis into an HTML report communicates the key findings effectively. The report should highlight identified issues, including warning messages, bottlenecks, processing efficiency metrics, and reasons for job failures. Such a structured report lets stakeholders quickly see which areas require attention.

The workspace is preset to save the HTML report to the path:

$(FME_SHAREDRESOURCE_DASHBOARD)\dashboards\FlowAIPerformance.html

This configuration makes the report available in the FME Flow web UI under the "Jobs > Dashboards" section, where FME Flow Administrators can readily access it. Administrators can then review and resolve the issues highlighted for jobs classified as outliers over the last review period.
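For readers who want to see what compiling findings into HTML might involve, here is a minimal sketch. The `findings` structure, column names, and page title are assumptions; the workspace's actual report layout is not reproduced here.

```python
from html import escape


def render_report(findings):
    """Render a list of per-job findings as a small standalone HTML page."""
    rows = "\n".join(
        "<tr><td>{}</td><td>{}</td></tr>".format(
            escape(str(item["job_id"])),
            escape(item["summary"]),  # escape AI text so log content cannot inject markup
        )
        for item in findings
    )
    return (
        "<!DOCTYPE html>\n"
        '<html><head><meta charset="utf-8">'
        "<title>Flow AI Performance</title></head><body>\n"
        "<table>\n<tr><th>Job ID</th><th>AI summary</th></tr>\n"
        + rows
        + "\n</table>\n</body></html>"
    )


# Made-up finding for demonstration.
report_html = render_report([{"job_id": 1042, "summary": "Read <timeout> error at step 3"}])
print(report_html)
```

Escaping the AI-generated text matters because job logs can contain angle brackets or other characters that would otherwise break the page.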

Creating an HTML dashboard report in this way presents the AI-analyzed job performance data systematically and supports proactive management and continuous improvement within the FME Flow environment.

This workspace empowers FME Flow Administrators to significantly improve system performance and preempt potential bottlenecks or failures by streamlining the job log and system performance review process. Automating the analysis of job statistics and logs with AI facilitates quicker identification and resolution of issues, thereby optimizing the overall efficiency of data processing workflows. This proactive approach minimizes downtime and ensures that FME Flow environments operate at peak performance, enhancing the reliability and effectiveness of data management tasks.

By default, the workspace writes its output to $(FME_SHAREDRESOURCE_DASHBOARD)\dashboards\FlowAIPerformance.html, which lets you view the report under Jobs > Dashboards.

Considerations

The flexibility of the provided workspace allows FME Flow Administrators to tailor AI integration to their organization's security policies and operational requirements. While this template uses OpenAI's GPT-4 for analysis, it is designed to be adaptable to various AI services. Organizations that prefer Anthropic Claude on AWS, for instance, can switch to the AmazonBedrockConnector, or opt for the LocalGenerativeAICaller for on-premises large language models (LLMs).

Furthermore, the workspace can be customized to target specific error messages or warnings, ensuring the prompts are finely tuned to meet your organization's unique needs. This generic template is a foundational tool for FME Flow Administrators to optimize workflows and enhance the system's performance and reliability.

The provided workspace is configured to operate in batch mode: once it is published to FME Flow and scheduled, each run processes the number of days' worth of job logs set by the review period in the workspace. An alternative is to create an automation that triggers the workspace when a job fails, starting the process at the log file download performed by HTTPCaller_2. This skips the comparative job statistics analysis and focuses solely on the failed job's log.

The workspace can be adapted for batch processing, for focusing on failed jobs, or for homing in on specific log file indicators, which makes it a versatile way to improve system performance and resolve issues preemptively. This adaptability lets FME Flow Administrators align the workspace's functionality with their operational strategies and objectives.